Course:CPSC522/Generic Aspect-based Aggregation of Sentiments

Primary Author: Prithu Banerjee

Generic Aspect-based Aggregation of Sentiments

Builds on: Uses Bayesian Inference to aggregate sentiments mined by Sentiment Analysers

Abstract

With the rise of e-commerce and other online applications, reviews of products and services have become plentiful, and researchers have invested considerable effort in understanding what those reviews say. One way to characterize a review is to identify each aspect it discusses and aggregate the sentiments expressed for those aspects into an overall sentiment for the product. This approach requires determining how much each aspect contributes to the aggregated sentiment. State-of-the-art work relies on tree-based ontologies for this: the position of an aspect in the hierarchy determines its weight of influence, and the parent-child relation determines how influences interact. We believe this model is too restrictive. Not all influence relations can be captured by a tree; a graph, specifically a Directed Acyclic Graph (DAG), is more expressive. We also argue that letting position alone determine the weights is not necessarily right. Hence, we cast the problem as a Bayesian model whose learning goal is to infer the weights, given aspects connected by a DAG. We compare this technique with a tree-based aggregation model on labeled data to see whether it performs better.

Motivation

The rapid expansion of technology and business has shifted the merchant paradigm, with online marketplaces now playing a dominant role. The popularity of e-commerce sites has also led to the large-scale availability of reviews and ratings, so it has become essential to mine this mostly crowd-sourced information correctly. It not only helps other buyers make wiser purchase decisions but also helps merchants align their recommendation and logistics strategies. Sentiment analysis is one important part of this mining task: it tries to evaluate the perspectives of consumers based on their online reviews of products and services. Aggregating those sentiments correctly, either at the product level or at the level of individual features/facets of a product, is useful for these needs. Moreover, with the boom of online social networks, many people around the globe express their concerns and comments on events happening around them. Sentiment analysis can again be used to decipher their aggregated sentiment, which is necessary for analyzing public opinion on a variety of issues.

The broader domain of sentiment analysis focuses on finding an automated way to classify people's opinions on a certain product/issue into labels such as “good”, “bad”, “neutral” etc. Sentiment aggregation, in contrast, aims at finding the overall polarity of reviews, which, as stated, is beneficial to both the consumers of the product/service and the organization providing it. To illustrate, consider a comment about a restaurant visit such as: “the restaurant had a nice ambient and great Thai food. The Chinese soup was also ok but certainly the quality of noodles was below par.” A sentiment analyser might label the sentiment for the ambience and the Thai food as good, but for the Chinese dish as neutral. The owner can leverage this to improve the quality of the Chinese food, and at the same time it helps other customers decide whether they would like to visit the place for a particular cuisine of interest.

However, there are usually a large number of comments on the web, and it is not feasible to go through every comment to make a decision. Sentence structures also vary widely, which makes the comments even more difficult to interpret. For example, consider the comment “the ambience was good, waitresses were very friendly, but the food sucks!”. It mentions two good features of a restaurant and only one bad feature, but that single feature, food, is the most important feature of any restaurant. Hence, even though other features such as ambience and service are good, the overall polarity of the comment should be negative. This suggests that simply counting the good and bad labels in a review is not sufficient to decide its overall polarity. Rather, it is crucial to understand how individual aspects interact with each other and how much influence each carries in determining the overall polarity of the sentiment for the product as a whole.

Background Knowledge

In this section, I briefly present the background required to understand our proposed framework, and I zero in on exactly where the need for our hypothesis arises. I will use the following review snippet as a running example:

the ambience was good, waitresses were very friendly, but thai dishes suck!

Sentiment analysis vs Sentiment Aggregation

Before presenting the methods currently used for sentiment aggregation, I would like to point out the difference between aggregation and analysis. Sentiment analysis primarily focuses on finding the exact sentiment expressed in the sentences of a review. The goal of an analyser is to tokenize the sentences of a review, find the sentiment polarity expressed in those tokens, and then identify the subject about which each sentiment has been expressed. Several models, such as dependency graphs and support vector machine (SVM)-based models, have been used for this task; a brief overview is given in the Related Work section. We use the term "aspect-sentiment" to denote the sentiment values generated by the analyser.

The purpose of aggregation, as the name suggests, is to use these individual aspect-sentiments to find the aggregated sentiment of a topic/product. More specifically, while the analyser generates a sentiment for each individual aspect, the aggregator combines those pieces into one overall sentiment.

Thus, in our example snippet, a sentiment analyser can identify that the ambience and the waitresses were spoken of with positive sentiment, but the Thai dishes were not.

However, the analyser cannot tell what the overall polarity is; hence the need for aggregation. Note that such occurrences may well span different sentences or even different documents, so aggregation poses challenges of its own.

Ontology and Aggregation

To aggregate meaningfully, we first need to understand how different concepts are related to each other. More specifically, we need to know that dish and ambience are two concepts related to restaurants. This is achieved by using domain knowledge expressed in the form of an ontology. An ontology is a form of knowledge management and representation that is often used by domain experts for the purpose of knowledge sharing. The two main components of an ontology are:

- Concepts/Classes: represented by nodes.

- Relationships: represented by directed arrows.

For example, the left half of Figure 1 shows three concepts: "restaurant", "food" and "ambience". The arrow from restaurant to food indicates that they are related. Together, these classes and relationships can be combined to assert statements about the real world. In our example, Thai is an instance of the class food, and the ontology captures that instances such as Thai are related to the concept food.

A sentiment analyser is assumed to output a sentiment for each such instance. When aggregating the sentiments, the ontology is often used to understand the direction of influence, which runs opposite to the direction of the arrows in the ontology. For example, in the ontology shown, food influences restaurant, following the reverse of the arrow connecting them (as shown in the right half of the figure). While the direction can easily be inferred from the ontology, the weight of influence is not as straightforward to determine. Works in the literature often use static approaches: the ontology is assumed to be a tree, providing a strict hierarchy between the concepts, and this order is leveraged to determine the weights. Under this approach, ambience and food have equal weights for the concept restaurant. However, as argued earlier, this does not always hold in the real world. Specifically, food should be a more important factor than ambience in the context of a restaurant.
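The direction-of-influence rule can be illustrated with a minimal sketch. The concept names come from the running example; the edge-list representation and the function names `influence_edges` and `influencers` are illustrative assumptions, not part of the original work.

```python
from collections import defaultdict

# Toy ontology from the running example: arrows point from a concept
# to the concepts related to it (restaurant -> food, restaurant -> ambience).
ONTOLOGY_EDGES = [
    ("restaurant", "food"),
    ("restaurant", "ambience"),
    ("food", "thai"),
]


def influence_edges(ontology_edges):
    """Reverse every ontology arrow to obtain the direction of influence."""
    return [(child, parent) for parent, child in ontology_edges]


def influencers(ontology_edges):
    """Map each concept to the concepts that directly influence it."""
    result = defaultdict(list)
    for source, target in influence_edges(ontology_edges):
        result[target].append(source)
    return dict(result)


influence = influencers(ONTOLOGY_EDGES)
print(influence["restaurant"])  # ['food', 'ambience']
```

The sketch only recovers who influences whom; it says nothing about how strongly, which is exactly the part the ontology alone does not provide.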

Moreover, representing the relationships as a tree is restrictive, since we believe many interactions are shared across concepts. We thus propose to view the relations as a more general Directed Acyclic Graph (DAG). Note that a DAG can be expanded into a tree by repeating nodes; nonetheless, we shall show next that the DAG model translates elegantly into a Bayesian learning framework as well.
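To make the tree/DAG distinction concrete, here is a small sketch that checks both properties on an edge list. The shared concept "presentation" is a hypothetical example invented for illustration; it is not taken from the ontology used in this work.

```python
from collections import defaultdict, deque


def is_tree_shaped(edges):
    """True if no concept has more than one parent (a tree or forest)."""
    parents = defaultdict(set)
    for parent, child in edges:
        parents[child].add(parent)
    return all(len(p) <= 1 for p in parents.values())


def is_dag(edges):
    """True if the directed graph has no cycle (Kahn's algorithm)."""
    children = defaultdict(list)
    indegree = defaultdict(int)
    nodes = set()
    for parent, child in edges:
        children[parent].append(child)
        indegree[child] += 1
        nodes.update((parent, child))
    queue = deque(n for n in nodes if indegree[n] == 0)
    visited = 0
    while queue:
        node = queue.popleft()
        visited += 1
        for child in children[node]:
            indegree[child] -= 1
            if indegree[child] == 0:
                queue.append(child)
    return visited == len(nodes)


# Hypothetical ontology fragment: "presentation" relates to both
# food and ambience, so it has two parents.
SHARED = [
    ("restaurant", "food"),
    ("restaurant", "ambience"),
    ("food", "presentation"),
    ("ambience", "presentation"),
]
print(is_tree_shaped(SHARED), is_dag(SHARED))  # False True
```

A concept with two parents is enough to break the tree assumption while still being a valid DAG.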

Related Work

Agarwal et al. [1] combined a feature-based model and a kernel-based model to classify the sentiments expressed on the popular social media platform Twitter. They used a hand-annotated dictionary for labeling emoticons and an acronym dictionary for Twitter-specific words, and translated foreign words to English. They weighted individual words using the Dictionary of Affect in Language (DAL). The overall polarity was classified using a Support Vector Machine (SVM) classifier.

Polpinij and Ghose [2] proposed another approach to classify product reviews, based on an ontology tree and an SVM classifier. They used a Lexical Variation Ontology to accommodate different forms of a word such as “block” and “blocks”. The major steps can be summarized as follows: tokenization, morphological analysis based on the Shortest Matching and Longest Matching algorithms, term extraction using the Apriori algorithm, and finally verification and transformation.

Mukherjee and Joshi [3] analyzed how the hierarchical relationship between different attributes of a product and their individual sentiments affect the overall polarity of a review of the product. They used ConceptNet to automatically create a product-specific ontology tree that describes the hierarchical relationship between different features of a product. ConceptNet is a crowd-sourced semantic network providing common-sense knowledge about different words, with the knowledge ordered according to relevance. The feature-specific polarities are aggregated over the ontology tree in a bottom-up manner to determine the overall polarity of the review. Our project is based on this work. Our hypothesis builds on this idea, but instead of letting the hierarchy determine the influence weights, we learn them from the data itself. Moreover, our framework allows the use of a graph-structured ontology. We pose this as a Bayesian learning objective.

Wu et al. [4] developed an approach to automatically identify product features and expression of opinions and how they are related. We use this for identifying sentiment for each concept occurring in reviews.

Our Proposed Hypothesis

As stated, our goal is to build a more generic framework for aggregation. To that end, we want to test the following two hypotheses:

- Is it possible to learn how the concepts influence each other from the data?

- Does a graph based model provide a generic framework for learning the influences?

Proposed framework

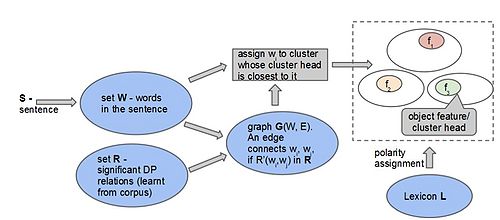

The end-to-end flow is shown in Figure 2.

It contains an offline learning phase, after which the learnt model is used for online inference. The input to the offline learning phase is an ontology, represented as a DAG, together with raw reviews. We first use a sentiment analyser to identify each concept discussed in each review and its corresponding sentiment. Additionally, we annotate the overall polarity of each review. These annotated reviews are used to train the weights of influence for each concept using a Bayesian framework. The learnt model is then used for online sentiment computation.

The online model, on the other hand, takes only a raw review as input. It again uses the sentiment analyser to label each concept and its individual polarity, and then uses the weights of the pre-trained model to compute the aggregated sentiment. The aggregated sentiment is compared with a human-labeled aggregated sentiment to validate its accuracy. In the following sections, we present each of the steps in more detail.

Annotation

Note that we use two types of annotation in the flow.

Annotation by sentiment analyser: As stated earlier, the aggregator builds on the individual aspect sentiments identified by the analyser.

Manual annotation: This annotation refers to the aggregated "true" sentiment of a review. We use human annotation here, and it serves as our ground truth. We compare the performance of our model and of the baselines against this annotation.

Bayesian Inference

A Bayesian network is a probabilistic graphical model that represents a set of random variables and their conditional dependencies using a directed acyclic graph. The joint distribution factorizes according to the conditional independencies encoded by the graph. An advantage of Bayesian networks is that many efficient inference algorithms exist for them. The parameters of the local conditional probability distributions can be learned using parameter estimation techniques such as maximum likelihood estimation (MLE) or expectation-maximization (EM).

In our setting, each concept can be seen as a random variable, with edges representing relatedness between concepts. Thus, the nodes correspond to the sentiments of aspects, and the directed edges model the influence between them. Such a Bayesian network captures not only hierarchical but also functional and other domain-specific relationships. The problem of predicting the sentiment of a target entity translates to performing inference in the network. In addition, this framework makes it possible to incorporate prior knowledge.
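For reference, the standard factorization of a Bayesian network can be written as follows. The symbols are introduced here for illustration only: $X_i$ are the sentiment variables, $\mathrm{Pa}(X_i)$ the parents of $X_i$ in the DAG, and $R$, $F$, $A$ stand for the restaurant, food and ambience concepts of the running example, assuming influence edges from food and ambience to restaurant.

```latex
% General factorization over the sentiment variables X_1, ..., X_n,
% where Pa(X_i) denotes the parents of X_i in the DAG.
P(X_1, \dots, X_n) = \prod_{i=1}^{n} P\bigl(X_i \mid \mathrm{Pa}(X_i)\bigr)

% Running example: R = restaurant, F = food, A = ambience,
% with influence edges F -> R and A -> R.
P(R, F, A) = P(F)\, P(A)\, P(R \mid F, A)
```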

Figure 3 shows a particular case of such an inference goal. Nodes with no incoming edges do not depend on any other concept, so the sentiments of their instances come directly from the sentiment analyser. Every other node can be seen as dependent on the nodes directly connected to it. Our learning aim is to infer the conditional probability of each such node's sentiment given the sentiments of the nodes it depends on.
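A minimal sketch of how such a conditional probability table could be estimated by counting is shown below. The original work uses Weka for learning; this stand-alone version, the function name `learn_cpt`, the add-alpha smoothing, and the toy records are all assumptions made for illustration. Setting `alpha=0` gives the plain maximum likelihood estimate.

```python
from collections import Counter, defaultdict

LABELS = ("positive", "neutral", "negative")


def learn_cpt(records, parents, child, alpha=1.0):
    """Estimate P(child | parents) by counting, with add-alpha smoothing."""
    counts = defaultdict(Counter)
    for record in records:
        key = tuple(record[p] for p in parents)
        counts[key][record[child]] += 1
    cpt = {}
    for key, counter in counts.items():
        total = sum(counter.values()) + alpha * len(LABELS)
        cpt[key] = {
            label: (counter[label] + alpha) / total for label in LABELS
        }
    return cpt


# Hypothetical annotated reviews: aspect sentiments plus the overall label.
records = [
    {"food": "negative", "ambience": "positive", "restaurant": "negative"},
    {"food": "negative", "ambience": "positive", "restaurant": "negative"},
    {"food": "negative", "ambience": "positive", "restaurant": "neutral"},
    {"food": "positive", "ambience": "negative", "restaurant": "positive"},
]

cpt = learn_cpt(records, ["food", "ambience"], "restaurant")
row = cpt[("negative", "positive")]
print(max(row, key=row.get))  # negative
```

In this toy data, bad food with good ambience most often yields a negative overall label, so the learnt table reflects that food outweighs ambience without any hand-set weight.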

Aspect Sentiment Annotation

As stated earlier, our hypothesis builds on the output of a sentiment analyser. This annotation is therefore not part of our hypothesis, but for the sake of completeness we briefly describe the process.

For any sentence, we first extract the words using a tokenizer. A WordNet-based similarity measure is then used to map each noun phrase to an aspect in the ontology. Finally, we search for nearby sentiment-carrying words to find the polarity of the sentiment expressed for each aspect identified in the sentence. The process is illustrated in Figure 4 below.

Experiments

In this section, we describe the experiments we performed to evaluate the framework described above. We run our experiments on a restaurant dataset; a brief overview of the data is presented next.

The Ontology and the reviews

We use the restaurant ontology available publicly: Restaurant Ontology. Figure 5 below represents the snapshot of a portion of the ontology.

As can be seen, there are many dependencies that do not follow a tree-based hierarchy. The same was noted in the work of Mukherjee and Joshi [3]. For their purposes, however, they pruned many edges and hence lost some useful information. In our setting, we do not need that forced pruning.

We also take the reviews from a public domain: Restaurant Reviews.

Baseline

Our goal is to compare how our graph-based aggregation fares against typical tree-based models. Note that the graph-based model is also equipped with influence weights, which need to be learnt. For the tree, the weights are assumed to be strictly determined by the depth of a node: leaf nodes have the highest influence, and so on. All nodes at the same level have the same influence weight.

We also create a second baseline, in which we augment the tree model with influence learning in place of the strict hierarchy-driven weighting.
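The depth-driven baseline can be sketched as follows. The text does not give the exact weighting function, so `weight = depth` is an assumption chosen only to match the description that deeper nodes carry more influence and nodes at the same level share a weight; the tree, the numeric polarity encoding (+1, 0, -1), and the function names are likewise illustrative.

```python
from collections import deque

# Hypothetical tree fragment for the running example.
TREE = {
    "restaurant": ["food", "ambience", "service"],
    "food": [],
    "ambience": [],
    "service": [],
}


def depths(tree, root):
    """Breadth-first search recording each node's depth below the root."""
    depth = {root: 0}
    queue = deque([root])
    while queue:
        node = queue.popleft()
        for child in tree.get(node, []):
            depth[child] = depth[node] + 1
            queue.append(child)
    return depth


def aggregate(tree, root, polarity):
    """Sum aspect polarities weighted by depth (assumed weight = depth)."""
    depth = depths(tree, root)
    score = sum(depth[aspect] * value for aspect, value in polarity.items())
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


# "the ambience was good, waitresses were very friendly, but thai dishes suck!"
review = {"ambience": 1, "service": 1, "food": -1}
print(aggregate(TREE, "restaurant", review))  # positive
```

Because food, ambience and service sit at the same level, they receive equal weights and the running example comes out positive, which is the behaviour the learnt weights are meant to correct.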

Training

For the training phase, we first annotate a small subset of the reviews, currently 100, with the overall sentiment expressed in each review. Occurrences of aspects are then identified from the nouns used in the reviews, using the WordNet-based similarity measure described earlier. Next, we use a window of length 5 around each aspect occurrence to find a sentiment-bearing word, called a lexicon. The sentiment polarity of the lexicon is identified using Bing Liu's list. Thus, at the sentence level, we extract the occurrences of aspects and their corresponding sentiments, following a standard approach taken by many sentiment analysers.
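The window-based extraction step can be sketched as below. This is a simplified stand-in, not the author's pipeline: the tiny word sets replace Bing Liu's list, the hand-written `ASPECT_TERMS` mapping replaces the WordNet-based matching, and the window is interpreted as up to five tokens on either side of the aspect, which is an assumption since the text does not say how the window is positioned.

```python
import re

# Tiny stand-in lexicon (assumption); the original work uses Bing Liu's list.
POSITIVE = {"good", "friendly", "great", "nice"}
NEGATIVE = {"suck", "sucks", "bad", "poor"}

# Hypothetical mapping from surface nouns to ontology aspects.
ASPECT_TERMS = {"ambience": "ambience", "waitresses": "service", "dishes": "food"}


def polarity_of(token):
    """Return the polarity of a token, or None if it carries no sentiment."""
    if token in POSITIVE:
        return "positive"
    if token in NEGATIVE:
        return "negative"
    return None


def aspect_sentiments(sentence, window=5):
    """Assign each aspect the polarity of the nearest sentiment word
    found within `window` tokens on either side of the aspect."""
    tokens = re.findall(r"[a-z']+", sentence.lower())
    found = {}
    for i, token in enumerate(tokens):
        aspect = ASPECT_TERMS.get(token)
        if aspect is None:
            continue
        start, stop = max(0, i - window), min(len(tokens), i + window + 1)
        candidates = [
            (abs(j - i), polarity_of(tokens[j]))
            for j in range(start, stop)
            if polarity_of(tokens[j]) is not None
        ]
        if candidates:
            found[aspect] = min(candidates, key=lambda c: c[0])[1]
    return found


snippet = "the ambience was good, waitresses were very friendly, but thai dishes suck!"
result = aspect_sentiments(snippet)
print(result)
```

Nearest-word matching is crude: here "waitresses" is paired with the preceding "good" rather than "friendly", which happens to give the same polarity but shows why real analysers use richer cues such as dependency structure.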

For the plain tree-based model there are no weights to infer. For both the graph model and the augmented tree model, however, we learn the weights by posing the task as Bayesian inference. We use the labeled dataset, for which we know the aggregated sentiments, to infer the parameters, which are the weights we want to learn. We use Weka for this purpose. Weka ingests the data and relations in ARFF format. Figure 6 shows an example ARFF file.

We limit ourselves to only ten relations, as can be seen in the relation section of the file. The reason is that our training dataset is very small, so many of the relations never arise in training, leading to sparseness that impacts the results negatively.
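As a rough illustration of the ARFF layout, the sketch below serializes annotated reviews into ARFF text. It does not reproduce the file from Figure 6: the relation name, the attribute names, and the helper `to_arff` are hypothetical, and only the general ARFF structure (`@relation`, nominal `@attribute` declarations, `@data`, and `?` for missing values) is relied on.

```python
LABELS = ("positive", "neutral", "negative")


def to_arff(relation, attributes, rows):
    """Serialize nominal sentiment data as ARFF text.

    Aspects that a review does not mention are written as '?',
    the ARFF marker for a missing value.
    """
    domain = "{" + ",".join(LABELS) + "}"
    lines = ["@relation " + relation, ""]
    for name in attributes:
        lines.append("@attribute " + name + " " + domain)
    lines += ["", "@data"]
    for row in rows:
        lines.append(",".join(row.get(name, "?") for name in attributes))
    return "\n".join(lines) + "\n"


# Hypothetical attribute names; the last one is the overall review label.
attributes = ["food", "ambience", "service", "overall"]
rows = [
    {"food": "negative", "ambience": "positive", "service": "positive", "overall": "negative"},
    {"food": "positive", "overall": "positive"},
]
arff_text = to_arff("restaurant_reviews", attributes, rows)
print(arff_text)
```

Writing unmentioned aspects as missing values makes the sparsity problem visible: with few reviews, most columns are mostly `?`.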

Results

To compare the models, we perform 3-fold, 4-fold and 5-fold cross-validation on the annotated dataset. The results are shown in the following two tables.

| Model | Review Polarity | Precision - 3 Fold | Precision - 4 Fold | Precision - 5 Fold | Recall - 3 Fold | Recall - 4 Fold | Recall - 5 Fold |
|---|---|---|---|---|---|---|---|
| Graph Based Sentiment Aggregation | Positive | 0.93667 | 0.95 | 0.942 | 0.986667 | 0.985 | 0.984 |
| Graph Based Sentiment Aggregation | Neutral | 0.602333 | 0.425 | 0.521333 | 0.42464 | 0.3625 | 0.293212 |
| Graph Based Sentiment Aggregation | Negative | 0.8933 | 0.8825 | 0.86 | 0.93 | 0.93 | 0.932 |
| Tree Based Sentiment Aggregation | Positive | 0.903333 | 0.905 | 0.902 | 0.836667 | 0.8325 | 0.842 |
| Tree Based Sentiment Aggregation | Neutral | 0.583333 | 0.625 | 0.5 | 0.373333 | 0.4375 | 0.24 |
| Tree Based Sentiment Aggregation | Negative | 0.616667 | 0.605 | 0.598 | 0.82 | 0.8325 | 0.81 |

| Model | Review Polarity | Precision - 3 Fold | Precision - 4 Fold | Precision - 5 Fold | Recall - 3 Fold | Recall - 4 Fold | Recall - 5 Fold |
|---|---|---|---|---|---|---|---|
| Graph Based Sentiment Aggregation | Positive | 0.93667 | 0.95 | 0.942 | 0.986667 | 0.985 | 0.984 |
| Graph Based Sentiment Aggregation | Neutral | 0.602333 | 0.425 | 0.521333 | 0.42464 | 0.3625 | 0.293212 |
| Graph Based Sentiment Aggregation | Negative | 0.8933 | 0.8825 | 0.86 | 0.93 | 0.93 | 0.932 |
| Tree with learnt weight | Positive | 0.94321 | 0.94814 | 0.95211 | 0.9529 | 0.936 | 0.9705 |
| Tree with learnt weight | Neutral | 0.4945 | 0.5133 | 0.5523 | 0.396667 | 0.3486 | 0.31333 |
| Tree with learnt weight | Negative | 0.91257 | 0.8745 | 0.8674 | 0.9425 | 0.915 | 0.92 |

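As can be seen, the graph-based model outperforms the standard tree-based model in almost every case; the only exception is the Neutral class under 4-fold cross-validation, where the tree-based model has higher precision and recall. However, once weight learning is incorporated into the tree model, we do not see much difference between it and the graph-based model.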
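Per-class precision and recall under k-fold cross-validation can be computed along the following lines. This is a generic sketch rather than the evaluation code used for the tables: the function names are illustrative, and the contiguous fold split is an assumption, since the text does not describe how folds were formed or how per-fold scores were combined.

```python
def k_fold_indices(n, k):
    """Split range(n) into k contiguous folds whose sizes differ by at most one."""
    folds, start = [], 0
    for i in range(k):
        size = n // k + (1 if i < n % k else 0)
        folds.append(list(range(start, start + size)))
        start += size
    return folds


def precision_recall(gold, predicted, label):
    """Precision and recall for one polarity label."""
    pairs = list(zip(gold, predicted))
    tp = sum(1 for g, p in pairs if g == label and p == label)
    fp = sum(1 for g, p in pairs if g != label and p == label)
    fn = sum(1 for g, p in pairs if g == label and p != label)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


# Fold sizes for the 100 annotated reviews under 3-fold cross-validation.
print([len(fold) for fold in k_fold_indices(100, 3)])  # [34, 33, 33]

# Toy gold and predicted labels for a single fold.
gold = ["positive", "positive", "negative", "neutral"]
predicted = ["positive", "negative", "negative", "positive"]
print(precision_recall(gold, predicted, "positive"))  # (0.5, 0.5)
```

With only 100 reviews, each test fold holds roughly 20 to 34 reviews, so a class as rare as Neutral is scored on very few examples, which may explain why its numbers fluctuate across fold counts.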

Discussion and Future directions

To summarize, we saw that learning influence weights from data can help to better aggregate the sentiments of individual aspects. However, the dataset we worked with is very small, which led to sparsity: each aspect occurs only a few times, and many aspects never occur at all. We were thus forced to restrict ourselves to the ten most frequently occurring relations. With more training data, we may gain more insight and improve performance further.

Apart from learning influence weights, one further generalization that can be studied is the identification of the aspects themselves. Currently, we consider only the aspects provided by the ontology. But a review has many other explicit and implicit aspects that can help in understanding the influences, for example the length of the review (short reviews are mostly not useful) or the authors and their biases. These can also be learned from data. They cannot replace the ontology, but they can work alongside it to further improve performance.

Annotated Bibliography

- [1] A. Agarwal, B. Xie, I. Vovsha, O. Rambow, R. Passonneau. (2011). Sentiment Analysis of Twitter Data. In Proceedings of the Workshop on Languages in Social Media, LSM 2011.

- [2] J. Polpinij and A. K. Ghose. (2008). An Ontology-based Sentiment Classification Methodology for Online Consumer Reviews. In Proceedings of the 2008 IEEE/WIC/ACM International Conference on Web Intelligence and Intelligent Agent Technology, WI-IAT '08.

- [3] S. Mukherjee and S. Joshi. (2013). Sentiment Aggregation using ConceptNet Ontology. In Proceedings of the 6th International Joint Conference on Natural Language Processing, IJCNLP 2013.

- [4] M. Hu and B. Liu. (2004). Mining and Summarizing Customer Reviews. In Proceedings of the Tenth ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, KDD 2004.