Course:CPSC522/Analysis of hierarchical prior for Language modeling

Analysis of hierarchical prior for language modeling

Principal Author: Kevin Dsouza

Collaborators: Zaccary Alperstein

Hypothesis

Implementing a hierarchical prior improves the performance of the language model.

Abstract

The hypothesis proposes that using a hierarchy in the prior improves the language model. First, we look at the drawbacks of RNN language models and introduce the salient features of Variational Autoencoders that address these shortcomings. Then two models are discussed, one with and one without a hierarchical prior. Both are implemented in the deep learning framework TensorFlow, and conclusions are drawn from the results.

Builds on

This page builds on

Introduction

To start off, let us first understand why we need to form a hidden representation of our data. Given data (an image or a sentence), we want to express it in the form of hidden (latent) variables through a network that can model the relationship between them. These hidden variables store the salient information of the given data. In Natural Language Processing, this compact representation can be used for a variety of tasks such as classification, reconstruction, and translation. The relation between the data ($X$) and the hidden variables ($Z$) can be modeled by the posterior distribution $P(Z \mid X)$. For sequential data, this distribution can be modeled with Recurrent Neural Networks such as a Long Short-Term Memory (LSTM) or a Gated Recurrent Unit (GRU).

In a regular Recurrent Neural Network Language Model (RNNLM), the hidden representation is a deterministic vector (usually the hidden state of the LSTM) that forms the basis for subsequent operations. The RNN is very good at mimicking the local statistics of the given data and naturally adapts to represent the statistics of the n-grams present in the sequence. What it lacks is variability in the representation, which is fundamental to generative models and to human language itself. This is where variational models come in. Instead of a deterministic representation of the entire sequence, a variational model maps the sequence to a distribution around this vector, from which samples can be drawn later. Figure 1 shows such a Gaussian distribution whose mean ($\mu$) and variance ($\sigma^2$) are parametrized by a neural network.
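A minimal sketch of how a sample is drawn from such a Gaussian, assuming the network outputs a mean vector `mu` and a log-variance vector `log_var` (these names are ours for illustration). The sample is written as $z = \mu + \sigma \epsilon$ with $\epsilon \sim \mathcal{N}(0, 1)$, the reparametrization that keeps $z$ differentiable with respect to $\mu$ and $\sigma$ inside a deep learning framework; plain Python is used here only to show the arithmetic.

```python
import math
import random


def sample_gaussian(mu, log_var, rng=random):
    """Draw z ~ N(mu, diag(exp(log_var))) as z = mu + sigma * eps, eps ~ N(0, 1)."""
    # sigma = exp(0.5 * log sigma^2); eps is fresh standard-normal noise per dimension
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, log_var)]


# Hypothetical network outputs for a 3-dimensional latent
mu = [0.5, -1.0, 2.0]
log_var = [0.0, -2.0, 1.0]
z = sample_gaussian(mu, log_var, random.Random(0))
```

Drawing many samples from the same `mu`, `log_var` gives the spread of representations around the mean vector that a deterministic RNN state cannot express.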

To drive the hidden representation to a distribution, we need prior knowledge of the distribution we want it to be close to. This prior distribution of the latent variables, $P(Z)$, is usually chosen to be a standard normal Gaussian. We want our posterior distribution to end up as close to the prior as possible, so that samples drawn later from the prior can reconstruct the data distribution satisfactorily (for the sake of explanation, assume our task is reconstructing the original data distribution). How can a sample drawn from a standard normal Gaussian regenerate the data distribution? The key is that any distribution in $d$ dimensions can be generated by taking a set of $d$ normally distributed variables and mapping them through a sufficiently complicated function [1]. In our case this function can be a neural network (the decoder) that learns a useful latent representation at some layer and then uses it to generate the data distribution.
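To make this claim concrete, the sketch below maps 2-D standard-normal samples through a hand-picked function $g(z) = z/10 + z/\lVert z \rVert$. This function is an illustrative choice, not a learned decoder. Every output lies at radius $1 + \lVert z \rVert / 10$, so the samples form a ring, a distribution that looks nothing like the Gaussian it came from.

```python
import math
import random


def g(z):
    """Illustrative mapping: push a 2-D Gaussian sample onto (roughly) a unit ring."""
    norm = math.hypot(z[0], z[1])
    return (z[0] / 10 + z[0] / norm, z[1] / 10 + z[1] / norm)


rng = random.Random(42)
points = [g((rng.gauss(0.0, 1.0), rng.gauss(0.0, 1.0))) for _ in range(5000)]
radii = [math.hypot(x, y) for x, y in points]
```

A VAE decoder plays the role of `g`, except that the mapping is learned from data rather than written by hand.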

Variational Autoencoders

Variational Autoencoders (VAEs) are a class of autoencoders that aim to reconstruct the data distribution by introducing variability in the intermediate representation. The joint distribution of $X$ and $Z$ can be written as $P(X, Z) = P(X \mid Z)\,P(Z)$, which means drawing a sample $z$ from the prior $P(Z)$ and then sampling $x$ from the conditional likelihood $P(X \mid Z)$. This likelihood can be any distribution, such as a Bernoulli or a Gaussian.
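Sampling from this joint (ancestral sampling) can be sketched as follows. The decoder here is a hypothetical linear layer followed by a sigmoid, which gives a Bernoulli likelihood for each binary output dimension. The weights are made up for illustration and have nothing to do with the models evaluated later.

```python
import math
import random


def sigmoid(a):
    return 1.0 / (1.0 + math.exp(-a))


def sample_joint(weights, bias, latent_dim, rng):
    """Ancestral sampling from P(X, Z) = P(X | Z) P(Z).

    P(Z) is a standard normal; P(X | Z) is a product of Bernoullis whose
    probabilities come from a hypothetical linear decoder sigmoid(W z + b).
    """
    z = [rng.gauss(0.0, 1.0) for _ in range(latent_dim)]          # z ~ P(Z)
    probs = [sigmoid(sum(w * zi for w, zi in zip(row, z)) + b)      # parameters of P(X | z)
             for row, b in zip(weights, bias)]
    x = [1 if rng.random() < p else 0 for p in probs]              # x ~ P(X | z)
    return z, x


W = [[1.5, -0.5], [0.2, 0.8], [-1.0, 1.0]]   # hypothetical 3x2 decoder weights
b = [0.0, -0.3, 0.1]
z, x = sample_joint(W, b, latent_dim=2, rng=random.Random(0))
```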

Inference in our model, i.e. finding good latent variables conditioned on the data samples (the posterior), is given by Bayes' rule:

$$P(Z \mid X) = \frac{P(X \mid Z)\,P(Z)}{P(X)}$$

The issue is that the true posterior is intractable, because the denominator is the marginal

$$P(X) = \int P(X \mid Z)\,P(Z)\,dZ$$

which requires integrating over all configurations of the latent variables and is intractable in general (for discrete latents, the number of configurations grows exponentially with their dimension).
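For intuition, the sketch below estimates $P(x)$ by Monte Carlo, averaging $P(x \mid z)$ over samples from the prior. It uses a toy model of our own choosing, $Z \sim \mathcal{N}(0, 1)$ and $X \mid Z \sim \mathcal{N}(Z, s^2)$, where the exact answer $\mathcal{N}(x; 0, 1 + s^2)$ is known. With a 1-D latent this works well. In high-dimensional latent spaces, most prior samples explain a given $x$ poorly, so this naive estimator needs an impractical number of samples, which motivates the approximate posterior introduced next.

```python
import math
import random


def normal_pdf(x, mean, var):
    return math.exp(-(x - mean) ** 2 / (2 * var)) / math.sqrt(2 * math.pi * var)


def mc_marginal(x, noise_var, n_samples, rng):
    """Estimate P(x) = integral of P(x | z) P(z) dz by averaging P(x | z_i), z_i ~ P(Z)."""
    total = 0.0
    for _ in range(n_samples):
        z = rng.gauss(0.0, 1.0)               # z_i ~ P(Z) = N(0, 1)
        total += normal_pdf(x, z, noise_var)  # P(x | z_i) = N(x; z_i, s^2)
    return total / n_samples


x, noise_var = 0.7, 0.5
exact = normal_pdf(x, 0.0, 1.0 + noise_var)  # closed form for this linear-Gaussian toy model
estimate = mc_marginal(x, noise_var, 100_000, random.Random(0))
```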

Therefore, an approximate posterior is designed to mimic the true posterior, and a lower bound on the log marginal likelihood is maximized, as derived below.

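We want to maximize the expected log marginal likelihood under the data distribution $P_{\text{data}}(X)$. $P_{\text{data}}(X)$ is the actual distribution of the data, whereas $P(X)$ is the one that we reconstruct, i.e.

$$\max \; \mathbb{E}_{X \sim P_{\text{data}}(X)}\left[\log P(X)\right]$$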

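The log marginal can itself be written as an expectation over samples of the latent variables ($Z$) drawn from the posterior $P(Z \mid X)$, since we want hidden variables that best explain the data. Because $\log P(X)$ does not depend on $Z$, this expectation is simply equal to it:

$$\log P(X) = \mathbb{E}_{Z \sim P(Z \mid X)}\left[\log P(X)\right]$$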

From Bayes' rule we have

$$P(X) = \frac{P(X \mid Z)\,P(Z)}{P(Z \mid X)}$$

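Substituting this back in, we get

$$\log P(X) = \mathbb{E}_{Z \sim P(Z \mid X)}\left[\log P(X \mid Z) + \log P(Z) - \log P(Z \mid X)\right]$$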

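The problem is again that the true posterior is intractable, because it requires the marginal obtained by integrating out all latent variables. We therefore introduce an approximate posterior $Q(Z \mid X)$. Since $\log P(X)$ does not depend on $Z$, the expectation can equally be taken under $Q(Z \mid X)$. Adding and subtracting $\log Q(Z \mid X)$ inside it gives

$$\log P(X) = \mathbb{E}_{Z \sim Q(Z \mid X)}\left[\log P(X \mid Z)\right] - \mathbb{E}_{Z \sim Q(Z \mid X)}\left[\log \frac{Q(Z \mid X)}{P(Z)}\right] + \mathbb{E}_{Z \sim Q(Z \mid X)}\left[\log \frac{Q(Z \mid X)}{P(Z \mid X)}\right]$$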

The second term is the KL divergence between the approximate posterior $Q(Z \mid X)$ and the prior $P(Z)$, and the final term is the KL divergence between the approximate posterior and the true posterior $P(Z \mid X)$.

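Therefore, writing $Q(Z \mid X)$ for the approximate posterior, we get

$$\log P(X) = \mathbb{E}_{Z \sim Q(Z \mid X)}\left[\log P(X \mid Z)\right] - D_{KL}\big(Q(Z \mid X) \,\|\, P(Z)\big) + D_{KL}\big(Q(Z \mid X) \,\|\, P(Z \mid X)\big)$$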
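The identity can be checked numerically in a toy model where every term has a closed form. We assume $Z \sim \mathcal{N}(0, 1)$ and $X \mid Z \sim \mathcal{N}(Z, s^2)$; this is not one of the models on this page. For any Gaussian $Q = \mathcal{N}(m, v)$, the sketch confirms that $\log P(x)$ minus the first two terms equals $D_{KL}(Q \,\|\, P(Z \mid x))$. The gap is therefore never negative, and it vanishes when $Q$ equals the true posterior.

```python
import math

# Toy model (illustrative assumption): Z ~ N(0, 1), X | Z ~ N(Z, s2).


def log_marginal(x, s2):
    """Exact log P(x); here P(X) = N(0, 1 + s2)."""
    return -0.5 * math.log(2 * math.pi * (1 + s2)) - x ** 2 / (2 * (1 + s2))


def lower_bound(x, s2, m, v):
    """E_Q[log P(x | Z)] - KL(Q || P(Z)) for Q(Z | x) = N(m, v)."""
    # E_Q[(x - Z)^2] = (x - m)^2 + v
    expected_loglik = -0.5 * math.log(2 * math.pi * s2) - ((x - m) ** 2 + v) / (2 * s2)
    kl_prior = 0.5 * (m ** 2 + v - math.log(v) - 1)
    return expected_loglik - kl_prior


def kl_gauss(m1, v1, m2, v2):
    """KL(N(m1, v1) || N(m2, v2)) for 1-D Gaussians."""
    return 0.5 * (math.log(v2 / v1) + (v1 + (m1 - m2) ** 2) / v2 - 1)


x, s2 = 0.7, 0.5
post_m, post_v = x / (1 + s2), s2 / (1 + s2)   # exact posterior P(Z | x)

for m, v in [(0.0, 1.0), (0.3, 0.2), (post_m, post_v)]:
    gap = log_marginal(x, s2) - lower_bound(x, s2, m, v)
    print(f"m={m:.3f} v={v:.3f} gap={gap:.6f} KL(Q||posterior)={kl_gauss(m, v, post_m, post_v):.6f}")
```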

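As the true posterior is intractable, the third term cannot be estimated directly. However, the KL divergence is always non-negative. Moving the third term to the left-hand side therefore leaves a quantity no larger than $\log P(X)$, so the remaining terms form a lower bound:

$$\log P(X) \ge \mathbb{E}_{Z \sim Q(Z \mid X)}\left[\log P(X \mid Z)\right] - D_{KL}\big(Q(Z \mid X) \,\|\, P(Z)\big)$$

This bound is called the variational lower bound (also known as the evidence lower bound, or ELBO), and it forms the basis for optimizing variational autoencoders.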

The right-hand side is the variational lower bound, which is to be maximized. In practice we minimize the negative of the lower bound, which breaks down into two terms: the reconstruction loss (the negative log likelihood of the data) and the KL loss.
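A minimal sketch of this loss, assuming a diagonal Gaussian posterior parametrized by a mean and a log-variance (as in the encoder code on this page) and a standard normal prior. Under these assumptions the KL term has the closed form $\frac{1}{2}\sum_j(\mu_j^2 + \sigma_j^2 - \log \sigma_j^2 - 1)$. The reconstruction term is the negative log of the probabilities the decoder assigns to the observed tokens. The argument `target_token_probs` is a hypothetical stand-in for that decoder output.

```python
import math


def kl_to_standard_normal(mu, log_var):
    """KL(N(mu, diag(exp(log_var))) || N(0, I)), summed over latent dimensions."""
    return 0.5 * sum(m * m + math.exp(lv) - lv - 1.0 for m, lv in zip(mu, log_var))


def negative_elbo(target_token_probs, mu, log_var):
    """Negative lower bound = reconstruction loss (token NLL) + KL loss."""
    reconstruction = -sum(math.log(p) for p in target_token_probs)
    kl = kl_to_standard_normal(mu, log_var)
    return reconstruction + kl, reconstruction, kl


# Hypothetical values: decoder probabilities of 2 observed tokens, 2-D latent
total, reconstruction, kl = negative_elbo([0.5, 0.25], mu=[0.3, -0.1], log_var=[-0.2, 0.1])
```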

Figure 2 shows the graphical model describing the VAE. The generative function is given by $P_\theta(X \mid Z)$, where $\theta$ are the generative model parameters. The inference function is our approximate posterior $Q_\phi(Z \mid X)$, where $\phi$ are the inference model parameters.

The question remains whether a single posterior distribution is informative enough to capture the nuances of a lengthy sentence. If the underlying data distribution is fairly complex (highly multimodal), a simple posterior will struggle to model it. It therefore seems natural to move to more expressive posterior distributions that can get closer to the true, intractable posterior, so that the variational lower bound becomes tighter. Toward this end, we can explore a hierarchical posterior distribution that exploits the natural hierarchy in a sentence. Each word partly depends on the word that precedes it and influences the word that follows it, and together the words are dependent in the context of the entire sentence. A posterior that can model these rich dependencies allows the model to exploit global sentence context.

Hierarchical Posterior

When the posterior is hierarchical, each latent word variable $z_t$ depends on the latent word preceding it. In addition, a global latent variable $z_g$ that depends on all the latent words of the sentence is introduced to give the sentence context. This posterior can be written as

$$Q(z_g, z_{1:T} \mid x_{1:T}) = Q(z_g \mid z_{1:T}) \prod_{t=1}^{T} Q(z_t \mid z_{t-1}, x_t)$$

Figure 3 shows this dependence more clearly. Every latent word sample ($z_t$) depends on the previous latent word ($z_{t-1}$) and the current word ($x_t$). The global latent $z_g$ depends on all the latent words.
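The factorization implies an ancestral sampling order: first $z_1, \dots, z_T$ in sequence, then $z_g$ from all of them. The sketch below shows that order with a toy 1-D parametrization. It assumes hypothetical linear-Gaussian conditionals and scalar word features, with $z_0 = 0$. In the actual model these conditionals come from an RNN, as in the encoder code on this page.

```python
import random


def sample_hierarchical_posterior(x, a, b, step_std, global_std, rng):
    """Ancestral sampling from Q(z_g | z_1..z_T) * prod_t Q(z_t | z_{t-1}, x_t).

    Toy 1-D version with hypothetical linear-Gaussian conditionals:
      z_t ~ N(a * z_{t-1} + b * x_t, step_std^2), with z_0 = 0
      z_g ~ N(mean(z_1..z_T), global_std^2)
    """
    z_prev, word_latents = 0.0, []
    for x_t in x:
        z_t = rng.gauss(a * z_prev + b * x_t, step_std)  # depends on previous latent and current word
        word_latents.append(z_t)
        z_prev = z_t
    # The global latent depends on all word latents (here only through their mean)
    z_global = rng.gauss(sum(word_latents) / len(word_latents), global_std)
    return word_latents, z_global


x = [0.2, -0.4, 1.0, 0.3]   # hypothetical scalar word features
word_latents, z_global = sample_hierarchical_posterior(
    x, a=0.8, b=1.0, step_std=0.1, global_std=0.1, rng=random.Random(0))
```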

Hierarchical Prior

A hierarchical prior looks much like the hierarchical posterior and has similar properties, in the sense that it has richer representational power. This departure from a standard normal Gaussian might help in modeling data that is highly multimodal. The hierarchical prior can be written as

$$P(z_g, z_{1:T}) = P(z_g) \prod_{t=1}^{T} P(z_t \mid z_{t-1}, z_g)$$

The prior is modeled so that each latent word depends on the preceding latent word and on the global latent. Note that the global latent variable is given a standard normal prior, $P(z_g) = \mathcal{N}(0, I)$.
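With a hierarchical prior, the KL loss for each latent word compares $Q(z_t \mid z_{t-1}, x_t)$ with $P(z_t \mid z_{t-1}, z_g)$ instead of with a fixed $\mathcal{N}(0, I)$. The sketch below assumes both are diagonal Gaussians whose means and log-variances come from the posterior and prior networks; the page does not show how the prior is parametrized. Under that assumption the per-step KL still has a closed form, and it reduces to the standard-normal case when the prior mean is 0 and its log-variance is 0.

```python
import math


def kl_diag_gaussians(mu_q, log_var_q, mu_p, log_var_p):
    """KL(N(mu_q, diag(exp(log_var_q))) || N(mu_p, diag(exp(log_var_p)))), summed over dims."""
    total = 0.0
    for mq, lvq, mp, lvp in zip(mu_q, log_var_q, mu_p, log_var_p):
        # 0.5 * (log sigma_p^2 - log sigma_q^2 + (sigma_q^2 + (mu_q - mu_p)^2) / sigma_p^2 - 1)
        total += 0.5 * (lvp - lvq + (math.exp(lvq) + (mq - mp) ** 2) / math.exp(lvp) - 1.0)
    return total


# Hypothetical posterior and prior parameters for one time step t
kl_t = kl_diag_gaussians(mu_q=[0.4, -0.2], log_var_q=[-1.0, -0.5],
                         mu_p=[0.1, 0.0], log_var_p=[-0.3, 0.2])
```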

Intuitively, the prior we assume should be descriptive enough to represent the data. Would it be acceptable for the prior to be simple (say, a standard normal Gaussian) while the posterior is hierarchical and complex? Which of these configurations performs better? I plan to check this with experiments and draw conclusions from the results.

Evaluation

The evaluation is conducted on the English Penn Treebank dataset, a large annotated corpus of over 4.5 million words of American English [2]. All models are implemented in the deep learning framework TensorFlow. A code snippet showing the implementation of an unrolled RNN (which can be used to implement the hierarchy) is given below.

```python
import tensorflow as tf  # TensorFlow 1.x API (tf.contrib, tf.nn.raw_rnn, tf.random_normal)

# Excerpt from the encoder method: `self`, `cell`, `word_pos`, `_inputs_ta`, `output_ta`,
# `mean_ta`, `sigma_ta`, `train` and `reuse` are defined in the enclosing scope (not shown).

def loop_fn(time, cell_output, cell_state, loop_state):
    emit_output = cell_output  # None when time == 0

    if cell_output is None:  # time == 0: initial cell state and empty TensorArrays
        next_cell_state = cell.zero_state(self.batch_size, tf.float32)
        next_loop_state = (output_ta, mean_ta, sigma_ta)
    else:
        # Broadcast this step's word-position indicator word_pos[:, time - 1] to [batch, hidden]
        word_slice = tf.tile(tf.expand_dims(word_pos[:, time - 1], 1), [1, self.hidden_size])
        next_sampled_input = tf.multiply(cell_output, word_slice)

        # Reparametrization: predict the mean and log-variance, then sample
        z_concat = tf.contrib.layers.fully_connected(next_sampled_input, 2 * self.hidden_size)
        z_concat = tf.contrib.layers.fully_connected(z_concat, 2 * self.hidden_size,
                                                     activation_fn=None)
        z_mean = z_concat[:, :self.hidden_size]
        z_log_sigma_sq = z_concat[:, self.hidden_size:]
        eps = tf.random_normal((self.batch_size, self.hidden_size), 0, 1, dtype=tf.float32)
        # The standard deviation is exp(0.5 * log sigma^2), not exp(log sigma^2)
        z_sample = z_mean + tf.exp(0.5 * z_log_sigma_sq) * eps

        # Zero out everything except word positions
        z_sample = tf.multiply(z_sample, word_slice)
        z_mean = tf.multiply(z_mean, word_slice)
        z_log_sigma_sq = tf.multiply(z_log_sigma_sq, word_slice)

        # Store a sample during training and the mean at test time
        z_out = z_sample if train else z_mean
        next_loop_state = (loop_state[0].write(time - 1, z_out),
                           loop_state[1].write(time - 1, z_mean),
                           loop_state[2].write(time - 1, z_log_sigma_sq))
        # The RNN carries its own state forward unchanged
        next_cell_state = cell_state

    next_input = tf.cond(time < self.max_char_len,
                         lambda: _inputs_ta.read(time),
                         lambda: tf.zeros(shape=[self.batch_size, self.input_size],
                                          dtype=tf.float32))
    elements_finished = time >= self.max_char_len
    return elements_finished, next_input, next_cell_state, emit_output, next_loop_state


with tf.variable_scope('encoder_rnn', reuse=reuse):
    outputs_ta, final_state_out, word_state = tf.nn.raw_rnn(cell, loop_fn)

word_state_out = word_state[0].stack()   # per-position latent (sample or mean)
mean_state_out = word_state[1].stack()
sigma_state_out = word_state[2].stack()
return word_state_out, mean_state_out, sigma_state_out  # end of the enclosing method
```

With hierarchical prior and hierarchical posterior

The first experiment uses a hierarchical prior in which only the global latent has a standard normal prior.

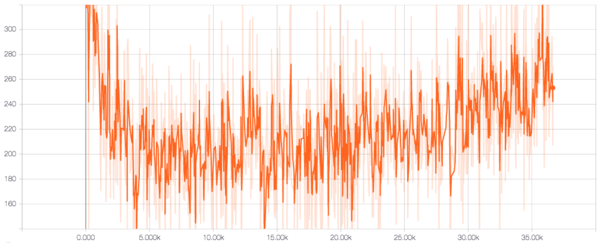

Figure 4 shows the reconstruction loss, i.e. the negative log likelihood of the data. The reconstruction loss decreases for the first 5000 training steps, after which it increases steadily. This is due to KL annealing, which is applied during training: the weight of the KL loss starts at zero and is gradually increased to 1. Until about 5000 steps, the network therefore focuses entirely on reconstruction, hence the decrease. Once the KL term kicks in, the reconstruction suffers.
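Such a schedule can be sketched as a simple function of the training step. The 5000-step start mirrors the curve described above. The linear ramp and its length `ramp_steps` are assumptions, since the exact schedule used in the experiments is not stated.

```python
def kl_weight(step, start=5000, ramp_steps=10000):
    """KL annealing weight: 0 before `start`, then a linear ramp reaching 1 after `ramp_steps` more steps."""
    if step < start:
        return 0.0
    return min(1.0, (step - start) / ramp_steps)


def annealed_loss(reconstruction, kl, step):
    """Training loss with the KL term scaled by the annealing weight."""
    return reconstruction + kl_weight(step) * kl
```

Before `start` the optimizer sees only the reconstruction loss, which matches the early decrease in Figure 4.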

Figure 5 shows that the KL loss starts high and decreases rapidly. This is characteristic of a VAE, which tries to match the posterior to the prior and thereby reduce the KL loss. In our case, two RNNs with high representational power model the prior and the posterior, which might be why they match up so quickly. The KL loss for the global latent behaves similarly, as seen in Figure 6. Figure 7 shows the histogram of the global latent variables: most of them are close to zero.

With simple prior and hierarchical posterior

In this experiment, a standard normal prior is used for all the latent words and for the global latent.

Figure 8 shows that the reconstruction loss is much lower than with the hierarchical prior. Also, after the KL term kicks in, the reconstruction loss does not suffer as much as in the previous case.

The KL loss of the global latent in Figure 9 is similar to the one observed with the hierarchical prior, in the sense that it rapidly goes to zero after the KL term starts. Figure 10 shows the histogram of the active global latent variables: more of the global latent variables are on than in the previous case. The histogram can be read as follows: the y-axis denotes the training steps, the x-axis denotes the bins, and the z-axis denotes the number of variables falling into each bin. With the hierarchical prior, these variables were all clustered around zero, but with the independent prior they are more spread out. This means that more information is carried by the latent variables, which should explain the improvement in reconstruction even though the posterior matches the prior quite well.
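Reading activity off a histogram is qualitative. One common heuristic for quantifying it, which is not the measure behind the figures here, is the number of "active units". A latent dimension counts as active if its posterior mean varies across inputs by more than a small threshold, with 0.01 being a typical choice. A sketch, assuming the posterior mean vector for each sentence has already been collected:

```python
def active_units(posterior_means, threshold=0.01):
    """Count latent dimensions whose posterior mean varies across inputs.

    posterior_means: one mean vector E_Q[z | x] per sentence x (all the same length).
    A dimension is active if the variance of its mean across sentences exceeds `threshold`.
    """
    n = len(posterior_means)
    active = 0
    for d in range(len(posterior_means[0])):
        values = [mu[d] for mu in posterior_means]
        mean = sum(values) / n
        variance = sum((v - mean) ** 2 for v in values) / n
        if variance > threshold:
            active += 1
    return active


# Hypothetical 3-D posterior means for three sentences: only dimension 1 varies
means = [[0.0, 1.0, 0.001], [0.0, -1.0, 0.002], [0.0, 0.5, 0.0]]
```

Latents clustered around zero for every sentence, as with the hierarchical prior, would count as inactive under this measure.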

It is surprising that an independent prior performs better than a hierarchical prior. This can partly be explained by the prior being weak: without much representational power, it cannot easily match the hierarchical posterior, so many latent variables stay active, which helps reconstruction and diversity. It could also be that the hierarchical prior needs better tuning to work with the present model. Because the model is deep, hyperparameter tuning affects its performance considerably and needs careful consideration. Other techniques, such as reweighting the KL term, also remain to be tried.

Conclusions

Variational models can be better language models because they inherently support diversity. This comes with the burden of additional parameter tuning, which must be carried out carefully for the model to perform as desired. We discussed two models and evaluated them in TensorFlow. The model with the hierarchical prior performs worse than expected and does not reduce the reconstruction loss satisfactorily. The simple independent prior, on the other hand, performs better and can be useful for obtaining independent word representations in a sentence context.

Annotated Bibliography

- [1] A tutorial on Variational Autoencoders

- [2] Marcus, Mitchell P., Mary Ann Marcinkiewicz, and Beatrice Santorini, 1993, "Building a large annotated corpus of English: The Penn Treebank.", Computational Linguistics 19.2: 313-330.
