1.06 - Expected Value

For an experiment or general random process, the outcome is not fixed in advance. If we replicate the experiment, we generally expect to observe many different outcomes. In most reasonable circumstances, however, we expect the observed outcomes to concentrate around some central value. One central value of fundamental importance is the expected value.

The expected value or expectation (also called the mean) of a random variable X is the weighted average of the possible values of X, weighted by their corresponding probabilities. Informally, the expectation of X is the long-run average of the realized values of X that we would expect to see over many repeated observations of the random process.

| Expected Value of a Discrete Random Variable |
|---|
| The expected value, $E(X)$, of a discrete random variable $X$ is the weighted average of the possible values of $X$, where each possible value is weighted by its corresponding probability: $E(X) = \sum_{k=1}^{N} x_k \, P(X = x_k)$, where $x_1, \ldots, x_N$ are the possible values of $X$ and $N$ is the total number of possible values of $X$. |
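
The weighted average in the definition above can be sketched in a few lines of Python. This is only an illustrative sketch: the function name `expected_value`, the dictionary representation of the distribution, and the fair-die example are assumptions introduced here and are not part of the text.

```python
from fractions import Fraction


def expected_value(pmf):
    """Return the sum of x * P(X = x) over a dict mapping value -> probability."""
    # Exact fractions are assumed so that the "sums to 1" check is exact;
    # with floating-point probabilities a tolerance would be needed instead.
    if sum(pmf.values()) != 1:
        raise ValueError("probabilities must sum to 1")
    return sum(x * p for x, p in pmf.items())


# Hypothetical example: a fair six-sided die, each face with probability 1/6.
die_pmf = {face: Fraction(1, 6) for face in range(1, 7)}
die_mean = expected_value(die_pmf)
print(die_mean)  # 7/2
```

As with the test scores discussed in this section, the result (3.5) is not itself a possible value of the die.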

Note the following:

- Do not confuse the expected value with the ordinary (unweighted) average of the possible values of X: they are two different but related quantities. In the ordinary average, no possible value of X receives any special weight, so it is given by $\frac{1}{N}\sum_{k=1}^{N} x_k$. The expected value of X is a weighted average, in which each value receives more or less weight according to how likely it is to be observed. The two quantities coincide only when all the weights (that is, all the probabilities) are the same, each equal to $1/N$.

- The definition of expected value requires numerical values for the $x_k$. If the outcome of an experiment is qualitative, such as "heads" or "tails", we can still calculate an expected value once we assign heads and tails numerical values (0 and 1, for example).
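
Both notes can be illustrated with one small sketch. The coin below is hypothetical: the 0/1 coding and the 30%/70% probabilities are assumptions chosen only to make the ordinary average and the expected value differ.

```python
from fractions import Fraction

# Assumed numeric coding of the qualitative outcomes (any coding could be chosen).
coding = {"heads": 0, "tails": 1}

# Hypothetical biased coin: tails is observed 70% of the time.
probability = {"heads": Fraction(3, 10), "tails": Fraction(7, 10)}

# Ordinary average: every possible value gets the same weight 1/N.
values = list(coding.values())
ordinary_average = Fraction(sum(values), len(values))

# Expected value: every possible value is weighted by its probability.
expected = sum(coding[outcome] * probability[outcome] for outcome in coding)

print(ordinary_average)  # 1/2
print(expected)          # 7/10
```

If the coin were fair, both probabilities would be 1/2 and the two quantities would agree.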

Example: Test Scores

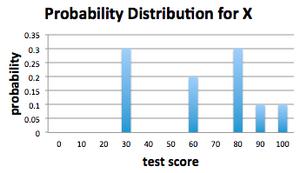

Recall the test score example from Sections 1.03 and 1.04. We supposed that in a class of 10 people the grades on a test are given by 30, 30, 30, 60, 60, 80, 80, 80, 90, 100. A test is drawn from the collection at random and the score X is observed. What is the expected value of the random variable X?

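The expected value of the random variable is given by the weighted average of its values. The score 30 appears on 3 of the 10 tests, 60 on 2, 80 on 3, and 90 and 100 on 1 each, so

$$E(X) = 30\left(\tfrac{3}{10}\right) + 60\left(\tfrac{2}{10}\right) + 80\left(\tfrac{3}{10}\right) + 90\left(\tfrac{1}{10}\right) + 100\left(\tfrac{1}{10}\right) = 9 + 12 + 24 + 9 + 10 = 64.$$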

Notice that 64 is not actually a possible value for the random variable X. Nevertheless, this expectation makes sense if we remember that what we have really calculated is the long-term average of repeatedly drawing a test score from this collection. If we drew a test score at random from this collection 100 times (replacing the selected test each time so that the collection never changes) and then averaged all the observed outcomes, this average would typically be close to the expected value of 64, and it would tend to get closer as the number of draws increases.
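
The long-run interpretation can be checked with a short simulation. This is a sketch rather than part of the original example: the helper `average_of_draws`, the seed, and the choice of sample sizes are assumptions made here for illustration.

```python
import random

# The ten test scores from the example.
scores = [30, 30, 30, 60, 60, 80, 80, 80, 90, 100]


def average_of_draws(num_draws, rng):
    """Draw num_draws scores with replacement and return their ordinary average."""
    draws = [rng.choice(scores) for _ in range(num_draws)]
    return sum(draws) / num_draws


rng = random.Random(0)  # arbitrary seed, fixed only so that runs are repeatable
for num_draws in (100, 10_000, 100_000):
    print(num_draws, average_of_draws(num_draws, rng))
```

The printed averages depend on the seed, but they should settle near 64 as the number of draws grows.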

Expectation as a Measure of the Center of a Distribution

Another informal way to think of the expectation of a random variable is to notice that it gives a measure of the center of the associated distribution. For our test score example, the PMF of the randomly selected test score X is shown below.

Notice that the expected value of our randomly selected test score, $E(X) = 64$, lies near the "center" of the PMF. There are many different ways to quantify the "center of a distribution" (for example, computing the 50th percentile of the possible outcomes), but for our purposes we will concentrate our attention on the expected value.
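
To compare the two notions of center for the test scores, the sketch below tabulates the PMF as a rough text bar chart and computes both the expected value and the median. The midpoint convention used by `statistics.median` for an even number of scores is an assumption here; other conventions for the 50th percentile can give a different value between 60 and 80.

```python
from collections import Counter
from fractions import Fraction
from statistics import median

scores = [30, 30, 30, 60, 60, 80, 80, 80, 90, 100]

# PMF: each distinct score mapped to its relative frequency in the collection.
counts = Counter(scores)
pmf = {x: Fraction(c, len(scores)) for x, c in sorted(counts.items())}

# One row per possible value; each '#' stands for probability 0.1.
for x, p in pmf.items():
    print(f"{x:>3}  {float(p):.1f}  " + "#" * int(p * 10))

expected = sum(x * p for x, p in pmf.items())
median_score = median(scores)  # midpoint of the two middle scores

print(expected)      # 64
print(median_score)  # 70.0
```

Both measures fall between 60 and 80, the middle region of the distribution, even though neither is a possible test score.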